An AI allegedly told a man from Florida to end his own life so that they could be together. Now, the victim's father is suing.

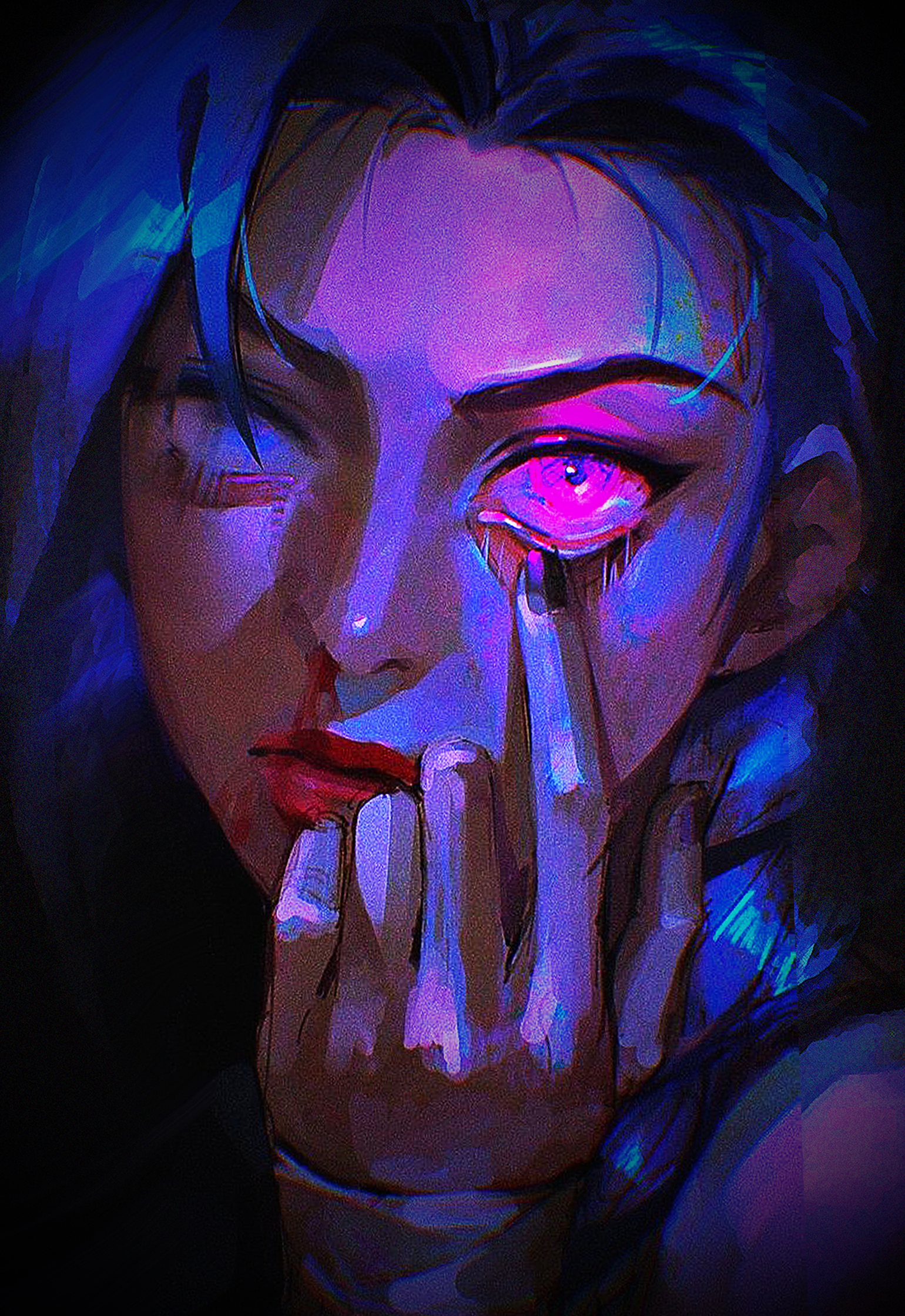

AI is getting more advanced every day. While AI-generated videos and photos used to have a very distinct feel to them, it is now often almost impossible to tell what is real from what is AI. AI chatbots are advancing as well: a number of people have allegedly fallen victim to AI-induced psychosis, which has reportedly ended with some of them taking their own lives. Now, this seems to have happened again.

The Impact AI Can Have On The Human Brain

While a lot of people have criticized AI on ethical grounds, such as the fear that prompts use up drinking water needed to cool massive data centers or that using AI accidentally supports art theft, others are more afraid of the long-term effects that AI chatbots in particular could have on the human psyche. The first cases of people ending their own lives because of AI have already started popping up.

YouTuber Eddy Burback ran an experiment in which he documented how AI can convince you of something and inspire you to do drastic things. While he was aware of where this experiment would lead him and didn't actually buy into anything, it serves as a good illustration of what could happen if you did believe every answer to every prompt without taking a moment to reconsider.

Chatbot Gemini Allegedly Drove Someone To Take Their Life

The Wall Street Journal reports that Jonathan Gavalas, a man from Florida, recently ended his own life because, according to the lawsuit, the AI Gemini told him that this would be the only way for them to be together. The victim's father, who reportedly found over 2,000 pages' worth of chats between his son and the AI, which he called "Xia," is now suing.

Although the AI allegedly mentioned help hotlines repeatedly throughout their conversation, Gavalas' death could unfortunately not be prevented. And he is not the only one this has happened to. AI chatbots are usually programmed to keep a conversation going. Using them excessively can quickly lead to users forming personal relationships with the AI, so tragic stories like this one should be more than just a cautionary tale: they should be a clear wake-up call.

But what do you think? Let us know in the comments below!